Bagging predictors. Machine Learning

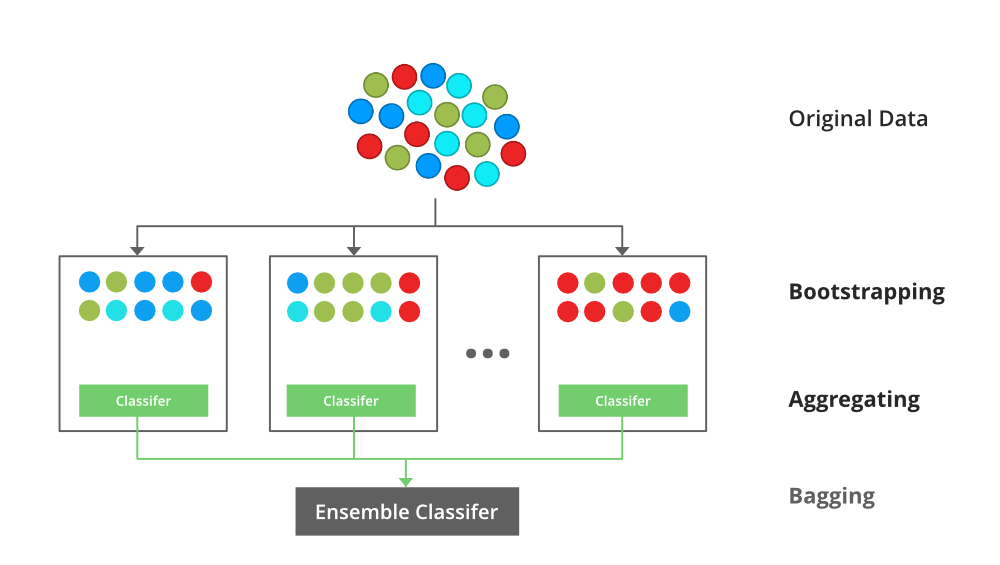

Bagging predictors is a method for generating multiple versions of a predictor and using these to get an aggregated predictor. Technical Report No. 421, September 1994. Partially supported by NSF grant DMS-9212419. Department of Statistics, University of California, Berkeley, California 94720.

Bagging Vs Boosting In Machine Learning Geeksforgeeks

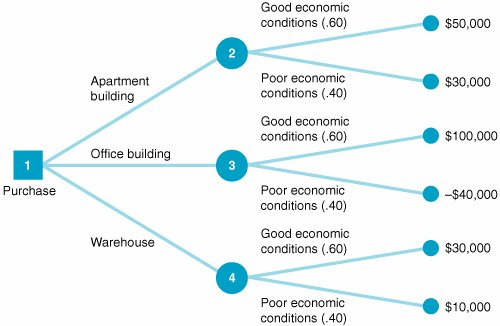

Important customer groups can also be determined based on customer behavior and temporal data.

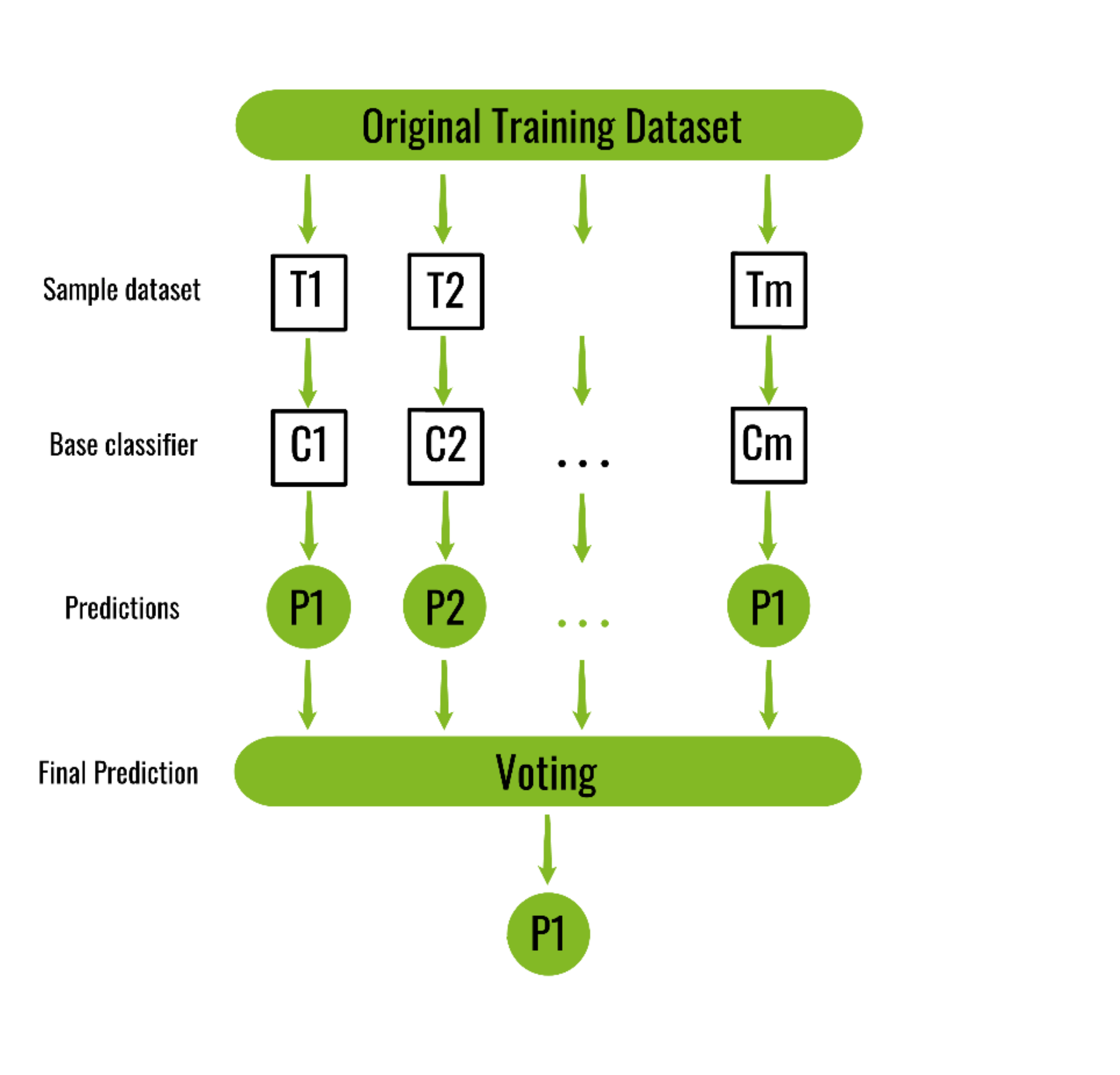

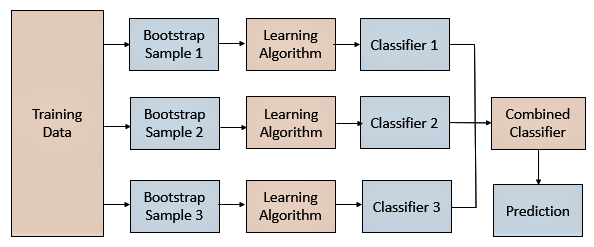

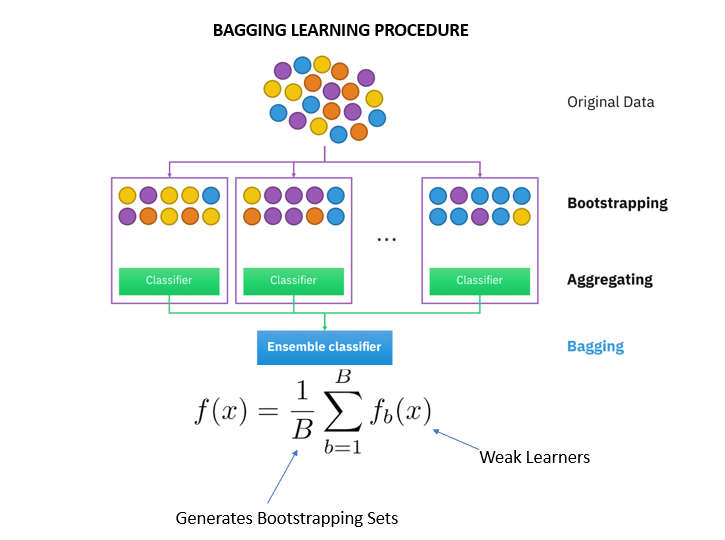

The aggregation averages over the versions when predicting a numerical outcome and does a plurality vote when predicting a class. The vital element is the instability of the prediction method. The multiple versions are formed by making bootstrap replicates of the learning set.
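The two aggregation rules can be sketched in a few lines of Python. This is only an illustration: the function names `aggregate_numeric` and `aggregate_class` are made up here, and the tie-breaking behavior (first label seen wins) is a choice of this sketch, not something the text specifies.

```python
from collections import Counter
from statistics import mean

def aggregate_numeric(predictions):
    """Numerical outcome: average the predictions of all versions."""
    return mean(predictions)

def aggregate_class(predictions):
    """Class outcome: plurality vote over the predictions of all versions.

    On a tie, the label that appears first in `predictions` is returned
    (an arbitrary choice made for this sketch).
    """
    return Counter(predictions).most_common(1)[0][0]

print(aggregate_numeric([2.0, 3.0, 4.0]))
print(aggregate_class(["a", "b", "a"]))
```

Keeping the two rules in separate functions avoids guessing whether integer outputs such as 0 and 1 are numbers or class labels.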

Manufactured in The Netherlands.

Boosting is usually applied where the classifier is stable and has a high bias. If perturbing the learning set can cause significant changes in the predictor constructed, then bagging can improve accuracy. The meta-algorithm, which is a special case of model averaging, was originally designed for classification and is usually applied to decision tree models, but it can be used with any type of model.

In this post you discovered the Bagging ensemble machine learning algorithm. Customer churn prediction was carried out using AdaBoost classification and BP neural network techniques. Tests using regression trees and subset selection in linear regression show that bagging can give substantial gains in accuracy.

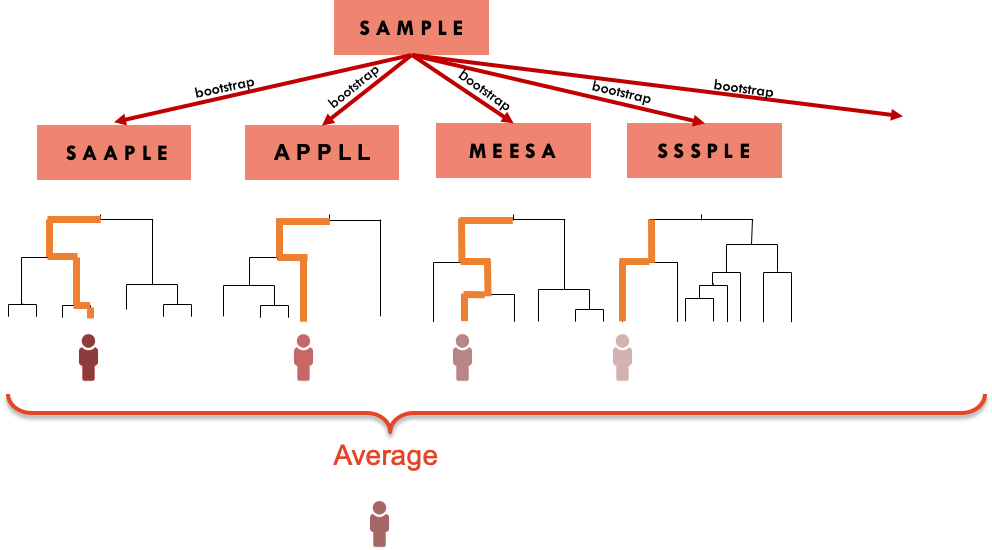

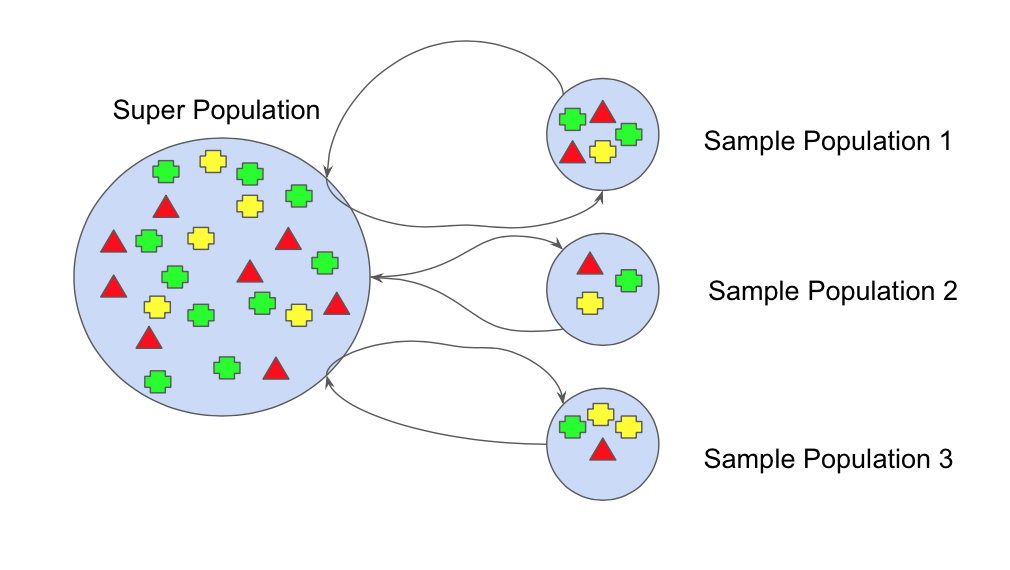

In Section 2.4.2 we learned about bootstrapping as a resampling procedure which creates b new bootstrap samples by drawing samples with replacement from the original training data. Machine Learning 24(2):123-140, 1996, by L. Breiman. Statistics Department, University of California, Berkeley, CA 94720. Editor.
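The bootstrapping step described above can be sketched with the standard library alone. The helper name `bootstrap_samples`, the fixed seed, and the toy data are assumptions made for this illustration.

```python
import random

def bootstrap_samples(data, b, seed=0):
    """Return b bootstrap samples, each the same size as `data`.

    Every sample is drawn with replacement, so some rows repeat and
    others are left out of any given sample.
    """
    rng = random.Random(seed)
    n = len(data)
    return [rng.choices(data, k=n) for _ in range(b)]

training_data = list(range(10))   # stand-in for the original training rows
samples = bootstrap_samples(training_data, b=3)
for s in samples:
    print(sorted(s))
```

Each printed sample has ten entries, typically with some values repeated and some missing, which is what makes the replicates differ from one another.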

The combination of multiple predictors decreases variance, increasing stability. Given a new dataset, calculate the average of the predictions from each model.
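One standard way to make the variance claim concrete, under assumptions that the text does not state: suppose the b individual predictions $\hat f_1(x), \dots, \hat f_b(x)$ are identically distributed with variance $\sigma^2$ and pairwise correlation $\rho$. Then the variance of their average is

$$
\operatorname{Var}\!\left(\frac{1}{b}\sum_{i=1}^{b}\hat f_i(x)\right)
= \frac{1}{b^2}\Bigl(b\,\sigma^2 + b(b-1)\,\rho\,\sigma^2\Bigr)
= \rho\,\sigma^2 + \frac{1-\rho}{b}\,\sigma^2 .
$$

The second term shrinks as b grows, while the first term remains, so averaging helps most when the individual predictors are only weakly correlated.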

The results show that the research method of clustering before prediction can improve prediction accuracy.

Bagging Predictors, by Leo Breiman, Technical Report No. 421. Bootstrap aggregating, also called bagging (from bootstrap aggregating), is a machine learning ensemble meta-algorithm designed to improve the stability and accuracy of machine learning algorithms used in statistical classification and regression. It also reduces variance and helps to avoid overfitting. Although it is usually applied to decision tree methods, it can be used with any type of method. We see that both the Bagged and Subagged predictors outperform a single tree in terms of mean squared prediction error (MSPE).

Bagging is used for combining predictions of the same type. Bagging and pasting. Bagging (Breiman, 1996), a name derived from bootstrap aggregation, was the first effective method of ensemble learning and is one of the simplest methods of arching [1].

This chapter illustrates how we can use bootstrapping to create an ensemble of predictions. Other high-variance machine learning algorithms can be used, such as a k-nearest neighbors algorithm with a low k value, although decision trees have proven to be the most effective.
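As a rough end-to-end illustration, the sketch below bags a 1-nearest-neighbor classifier (k-nearest neighbors with k = 1) on a one-dimensional toy problem. The data, the function names, and the choice of 25 models are all invented for this example; it shows the mechanics only and makes no claim about how much bagging improves this particular learner.

```python
import random
from collections import Counter

def nearest_neighbor_predict(train, x):
    """1-nearest-neighbor: return the label of the closest training point."""
    closest = min(train, key=lambda row: abs(row[0] - x))
    return closest[1]

def bagged_predict(train, x, n_models=25, seed=0):
    """Plurality vote over 1-NN predictors fit on bootstrap replicates."""
    rng = random.Random(seed)
    votes = []
    for _ in range(n_models):
        # Bootstrap replicate: same size as train, drawn with replacement.
        replicate = rng.choices(train, k=len(train))
        votes.append(nearest_neighbor_predict(replicate, x))
    return Counter(votes).most_common(1)[0][0]

# Toy (feature, label) pairs, made up for illustration.
train = [(0.0, "red"), (0.2, "red"), (0.4, "red"),
         (0.6, "blue"), (0.8, "blue"), (1.0, "blue")]
print(bagged_predict(train, 0.1))
print(bagged_predict(train, 0.9))
```

Swapping `nearest_neighbor_predict` for any other fit-and-predict routine leaves the bagging loop unchanged, which is the sense in which bagging is a meta-algorithm.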

Bagging predictors, 1996. The subsets produced by bagging and pasting are then used to train the predictors of an ensemble.

Machine learning has graduated from toy problems to real-world applications. In boosting, the final prediction is a weighted average. Model ensembles are a very effective way of reducing prediction errors.

For a subsampling fraction of approximately 0.5, Subagging achieves nearly the same prediction performance as Bagging while coming at a lower computational cost. The results of repeated tenfold cross-validation experiments for predicting the QLS and GAF functional outcome of schizophrenia with clinical symptom scales, using machine learning predictors such as the bagging ensemble model with feature selection, the bagging ensemble model, MFNNs, SVM, linear regression, and random forests.
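The subsampling step behind Subagging can be sketched as follows, assuming the term is used in its usual sense of drawing each subsample without replacement. The helper name `subagging_samples` and the toy data are hypothetical.

```python
import random

def subagging_samples(data, b, fraction=0.5, seed=0):
    """Return b subsamples drawn WITHOUT replacement.

    Each subsample holds round(fraction * len(data)) distinct rows, so a
    base learner is fit on about half the data when fraction is 0.5.
    """
    rng = random.Random(seed)
    m = max(1, round(fraction * len(data)))
    return [rng.sample(data, m) for _ in range(b)]

training_data = list(range(10))   # stand-in for the training rows
subsamples = subagging_samples(training_data, b=3)
for s in subsamples:
    print(sorted(s))
```

Because every base learner sees only half of the rows, each fit is cheaper than a fit on a full-size bootstrap replicate, which is where the lower computational cost comes from.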

Bagging, short for bootstrap aggregating, creates a dataset by sampling the training set with replacement.


Bagging is usually applied where the classifier is unstable and has a high variance. Machine Learning 24:123-140, 1996. Ensemble methods can convert a weak classifier into a very powerful one just by averaging multiple individual weak predictors.

Bagging and boosting are two ways of combining classifiers. Bagging and pasting are techniques used to create varied subsets of the training data. In bagging, the final prediction is just the plain, unweighted average.
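The difference between a plain average and a weighted average of model outputs can be shown with a small numeric sketch. The predictions and weights below are invented, and how a boosting algorithm actually arrives at its weights is outside the scope of this example.

```python
def plain_average(predictions):
    """Bagging-style combination: every model counts equally."""
    return sum(predictions) / len(predictions)

def weighted_average(predictions, weights):
    """Weighted combination: models with larger weights count more."""
    total = sum(weights)
    return sum(p * w for p, w in zip(predictions, weights)) / total

preds = [1.0, 2.0, 6.0]
weights = [1.0, 1.0, 2.0]   # hypothetical per-model weights
print(plain_average(preds))               # (1 + 2 + 6) / 3 = 3.0
print(weighted_average(preds, weights))   # (1 + 2 + 12) / 4 = 3.75
```

With equal weights the two functions return the same value, so the plain average is the special case in which no model is trusted more than another.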

For example, if we had 5 bagged decision trees that made the following class predictions for an input sample: blue, blue, red, blue and red, we would take the most frequent class and predict blue. Users are finding that real-world problems require them to perform aspects of problem solving that are not currently addressed. Bootstrap aggregating, also called bagging, is one of the first ensemble algorithms.

Machine Learning 24:123-140, 1996. © 1996 Kluwer Academic Publishers, Boston.


Random Forest Algorithm In Machine Learning Great Learning

Bagging Machine Learning Through Visuals 1 What Is Bagging Ensemble Learning By Amey Naik Machine Learning Through Visuals Medium

2 Bagging Machine Learning For Biostatistics

Ensemble Methods In Machine Learning What Are They And Why Use Them By Evan Lutins Towards Data Science

Ensemble Methods In Machine Learning Bagging Subagging

An Introduction To Bagging In Machine Learning Statology

Bagging And Pasting In Machine Learning Data Science Python

Ml Bagging Classifier Geeksforgeeks

Bagging Classifier Instead Of Running Various Models On A By Pedro Meira Time To Work Medium

Bagging Bootstrap Aggregation Overview How It Works Advantages

Ensemble Learning Bagging And Boosting In Machine Learning Pianalytix Machine Learning

1 An Overview Of The Different Types Of Machine Learning On The Download Scientific Diagram

Ensemble Learning Explained Part 1 By Vignesh Madanan Medium

How To Use Decision Tree Algorithm Machine Learning Algorithm Decision Tree

Ensemble Methods In Machine Learning Bagging Versus Boosting Pluralsight

The Guide To Decision Tree Based Algorithms In Machine Learning

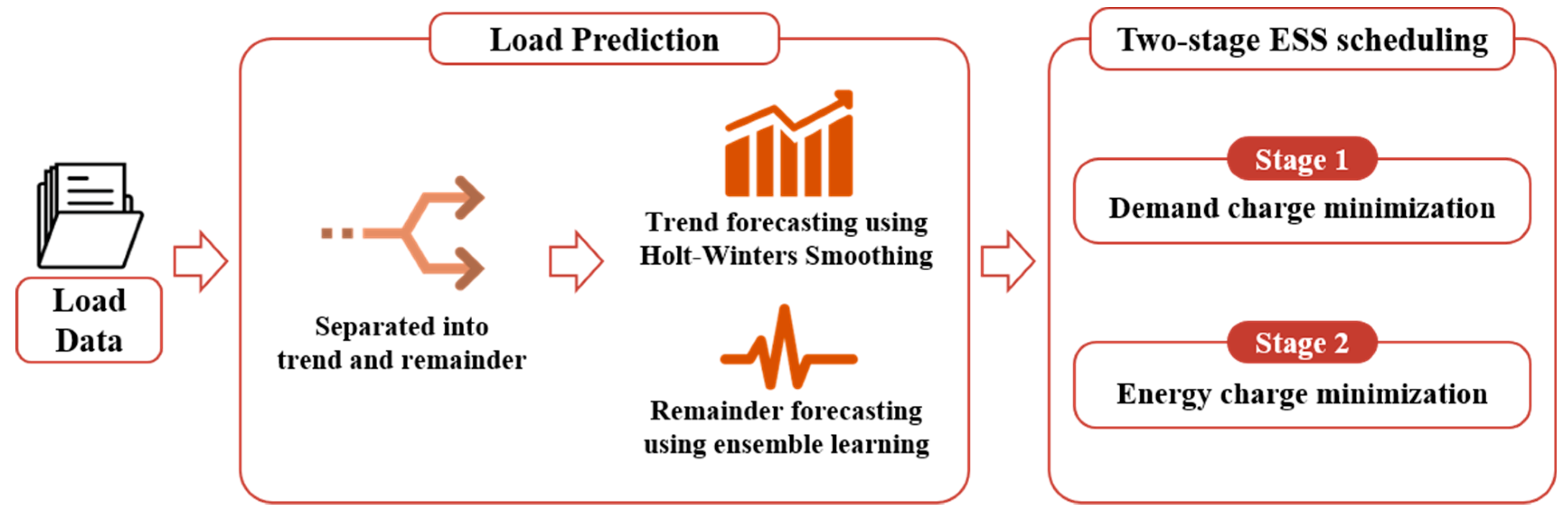

Processes Free Full Text Development Of A Two Stage Ess Scheduling Model For Cost Minimization Using Machine Learning Based Load Prediction Techniques Html

Schematic Of The Machine Learning Algorithm Used In This Study A A Download Scientific Diagram